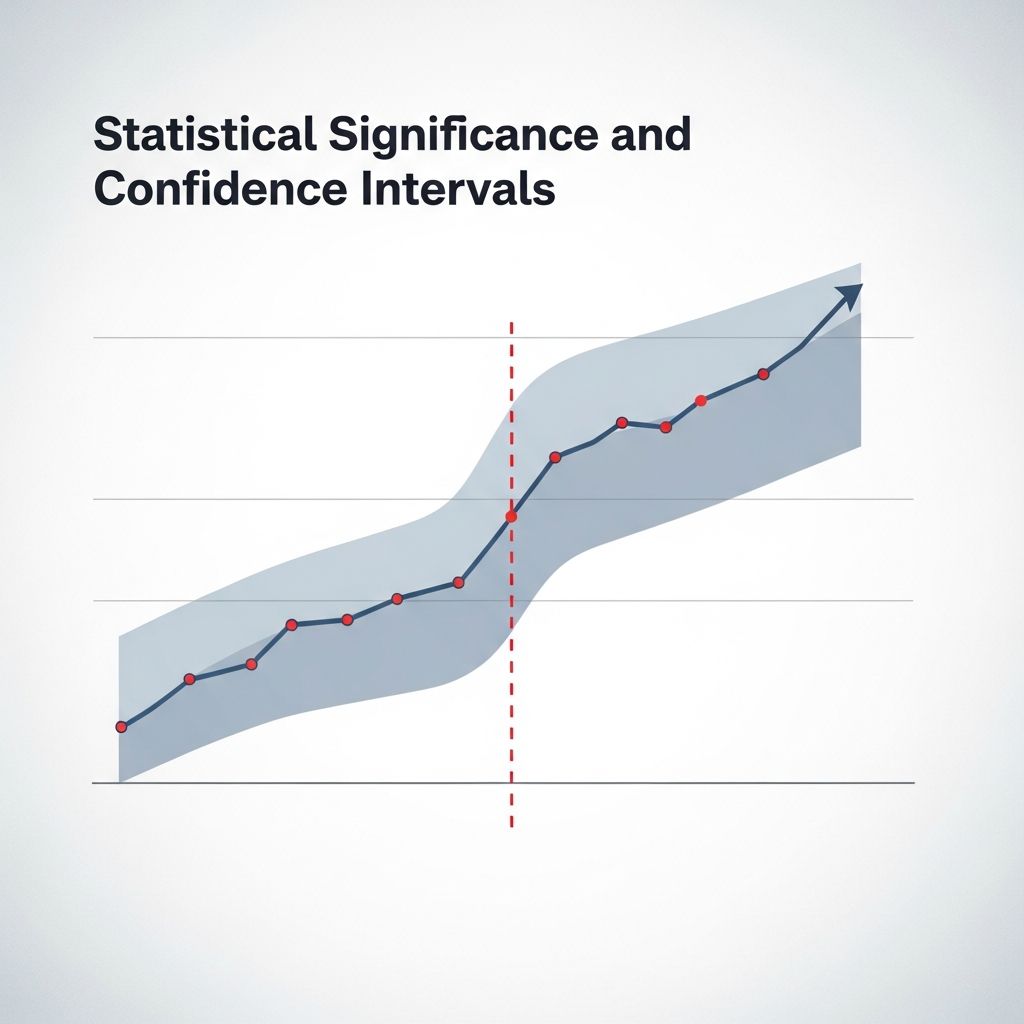

Statistical Significance and Confidence Intervals

Master the relationship between p-values and confidence intervals in statistical testing

Statistical analysis forms the backbone of modern research across disciplines ranging from medicine and psychology to economics and environmental science. Two fundamental concepts that researchers must understand are statistical significance and confidence intervals. While these terms are often used separately, they work together to provide a complete picture of whether research findings are reliable and meaningful.

Defining Statistical Significance in Research Context

Statistical significance serves as a measure of whether observed results in a study are likely due to a real effect or simply random chance. When researchers conduct experiments or observational studies, they typically begin with a null hypothesis—a statement asserting that no relationship or effect exists between variables. The purpose of statistical testing is to determine whether the sample data provides sufficient evidence to reject this null hypothesis.

The significance level, commonly represented by the Greek letter alpha (α), establishes the threshold for decision-making. The most widely adopted significance level is 0.05, meaning researchers are willing to accept a 5% probability of incorrectly rejecting a true null hypothesis. This type of error is called a Type I error or false positive. The p-value is the probability of observing data at least as extreme as the sample if the null hypothesis were true. When the p-value falls below the established alpha level, the result is deemed statistically significant: the data would be unlikely under the null hypothesis, which is taken as evidence of a genuine effect rather than random variation.
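As a rough illustration of the decision rule, the sketch below compares a two-sided p-value with alpha. It assumes the test statistic follows a standard normal distribution under the null hypothesis (a z-test); the function names and the example statistic of 2.1 are hypothetical, not taken from any particular study.

```python
from statistics import NormalDist

def two_sided_p_value(z: float) -> float:
    """Two-sided p-value for a z statistic under a standard normal null."""
    return 2 * (1 - NormalDist().cdf(abs(z)))

def is_significant(p_value: float, alpha: float = 0.05) -> bool:
    """Reject the null hypothesis when the p-value falls below alpha."""
    return p_value < alpha

p = two_sided_p_value(2.1)  # hypothetical test statistic
print(f"p = {p:.4f}, significant at 0.05: {is_significant(p)}")
```

Other tests (t, chi-square) follow the same comparison but use a different reference distribution for the p-value.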

The Foundation of Confidence Intervals

A confidence interval represents a range of plausible values for a population parameter estimated from sample data. Rather than reporting a single point estimate, confidence intervals acknowledge the inherent uncertainty present in statistical inference. When researchers construct a 95% confidence interval, they are stating that if the same study were repeated numerous times using the same methodology, approximately 95% of those repeated intervals would contain the true population parameter.

This interpretation reflects what statisticians call the frequentist approach to confidence intervals. It emphasizes the long-run performance of the method used to generate the interval rather than making claims about the probability that any single interval contains the true value. Understanding this distinction proves crucial for correctly interpreting confidence intervals in practice.
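The long-run interpretation can be checked with a small simulation. The sketch below is illustrative only: it assumes normally distributed data with a known true mean, uses the normal critical value instead of the t distribution, and the function name and default settings are hypothetical.

```python
import random
from statistics import NormalDist, mean, stdev

def coverage_rate(true_mean=10.0, sd=2.0, n=50, repeats=2000,
                  level=0.95, seed=0):
    """Fraction of repeated intervals that contain the true mean."""
    rng = random.Random(seed)
    crit = NormalDist().inv_cdf(0.5 + level / 2)
    hits = 0
    for _ in range(repeats):
        sample = [rng.gauss(true_mean, sd) for _ in range(n)]
        m = mean(sample)
        se = stdev(sample) / n ** 0.5
        if m - crit * se <= true_mean <= m + crit * se:
            hits += 1
    return hits / repeats

rate = coverage_rate()
print(f"Share of intervals containing the true mean: {rate:.3f}")
```

The share should land close to 0.95. Any single interval from the loop either contains the true mean or does not; the 95% describes the procedure across repetitions.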

Key Components Required for Confidence Interval Calculation

Computing a confidence interval requires four essential pieces of information:

- The point estimate derived from your sample data

- The critical value corresponding to your chosen confidence level

- The standard deviation or standard error of your sample

- The sample size

These components combine using specific formulas that vary depending on whether you are estimating a population mean, proportion, or other parameters. The critical value serves as a multiplier that defines how many standard errors you must extend from the point estimate to capture the desired confidence level. For intervals based on the normal distribution, common critical values include 1.96 for a 95% confidence interval and 2.576 for a 99% confidence interval.
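A minimal sketch of how the four components combine for a population mean is shown below. It assumes a normal-based interval (for small samples a t critical value would usually replace the normal one), and the function names and example numbers are hypothetical.

```python
from math import sqrt
from statistics import NormalDist

def critical_value(confidence_level: float) -> float:
    """Two-sided normal critical value for the given confidence level."""
    return NormalDist().inv_cdf(0.5 + confidence_level / 2)

def mean_confidence_interval(point_estimate, sample_sd, n,
                             confidence_level=0.95):
    """Interval = point estimate +/- critical value * standard error."""
    standard_error = sample_sd / sqrt(n)
    margin = critical_value(confidence_level) * standard_error
    return point_estimate - margin, point_estimate + margin

print(round(critical_value(0.95), 3))  # 1.96
print(round(critical_value(0.99), 3))  # 2.576

# Hypothetical sample: mean 50, standard deviation 10, n = 100
low, high = mean_confidence_interval(50.0, 10.0, 100)
print(f"95% CI: {low:.2f} to {high:.2f}")
```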

Connecting Confidence Intervals to Statistical Significance

The relationship between confidence intervals and statistical significance becomes evident through a straightforward principle: if a confidence interval does not include the null value (typically zero for differences or one for ratios), the result is statistically significant at the corresponding alpha level. Conversely, if the null value falls within the confidence interval, the result fails to achieve statistical significance.
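The correspondence can be seen by computing both quantities from the same estimate. The sketch below assumes a normal-based (z) test and interval built from one standard error; the function name and the two example estimates are hypothetical.

```python
from statistics import NormalDist

def z_test_and_interval(estimate, se, null_value=0.0, alpha=0.05):
    """Return the two-sided p-value, the (1 - alpha) interval,
    and whether that interval excludes the null value."""
    nd = NormalDist()
    z = (estimate - null_value) / se
    p_value = 2 * (1 - nd.cdf(abs(z)))
    crit = nd.inv_cdf(1 - alpha / 2)
    interval = (estimate - crit * se, estimate + crit * se)
    excludes_null = not (interval[0] <= null_value <= interval[1])
    return p_value, interval, excludes_null

for est in (4.0, 1.5):  # hypothetical mean differences, SE = 1.0
    p, (low, high), excludes = z_test_and_interval(est, se=1.0)
    print(f"estimate {est}: p = {p:.4f}, "
          f"95% CI {low:.2f} to {high:.2f}, excludes 0: {excludes}")
```

In both cases the interval excludes zero exactly when the p-value is below 0.05, because the test and the interval use the same critical value.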

This connection provides researchers with an alternative lens for interpreting statistical tests. Rather than relying solely on p-values, examining confidence intervals offers additional insight into the magnitude and precision of effects. A narrow confidence interval indicates precise estimation, while a wide interval reveals considerable uncertainty about the true parameter value.

Practical Differences Between Confidence Levels and Confidence Intervals

These related but distinct concepts frequently cause confusion. The confidence level represents the percentage—typically 90%, 95%, or 99%—that reflects how often the method successfully captures the true parameter when repeated many times. The confidence interval, by contrast, is the actual range of values calculated from your data.

For example, if a study reports “the 95% confidence interval for the mean difference is 2.3 to 5.7 units,” the confidence level (95%) describes the method’s reliability, while the interval (2.3 to 5.7) represents the specific range estimated from the sample data. These work together: a higher confidence level produces a wider interval, reflecting the increased certainty demanded by the larger percentage.
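To make the trade-off concrete, the sketch below recomputes the 2.3 to 5.7 example at other confidence levels. It assumes the reported interval was normal-based, so the standard error is back-calculated from the 95% half-width; that assumption and the function name are illustrative, not part of the reported study.

```python
from statistics import NormalDist

estimate = 4.0  # midpoint of the 2.3 to 5.7 interval
# Implied standard error, assuming a normal-based 95% interval
se = 1.7 / NormalDist().inv_cdf(0.975)

def interval(level):
    """Normal-based interval for the estimate at the given level."""
    crit = NormalDist().inv_cdf(0.5 + level / 2)
    return estimate - crit * se, estimate + crit * se

for level in (0.90, 0.95, 0.99):
    low, high = interval(level)
    print(f"{level:.0%}: {low:.2f} to {high:.2f} "
          f"(width {high - low:.2f})")
```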

The Role of Sample Size and Distribution in Confidence Intervals

Two critical factors influence the width and accuracy of confidence intervals: sample size and data distribution. Larger samples produce narrower confidence intervals because increased sample sizes reduce standard error. This relationship follows mathematical principles—as the denominator (related to √n) increases, the standard error decreases, resulting in tighter bounds around the estimate.
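The square-root relationship means that quadrupling the sample size halves the interval width. A short sketch, assuming a normal-based interval for a mean and a hypothetical sample standard deviation of 10:

```python
from math import sqrt
from statistics import NormalDist

def ci_width(sample_sd, n, level=0.95):
    """Full width of a normal-based interval: 2 * critical * sd / sqrt(n)."""
    crit = NormalDist().inv_cdf(0.5 + level / 2)
    return 2 * crit * sample_sd / sqrt(n)

for n in (25, 100, 400):
    print(n, round(ci_width(10.0, n), 2))
# 25 7.84
# 100 3.92
# 400 1.96
```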

The distribution of data also matters significantly. Many statistical methods assume an approximately normal sampling distribution, relying on the central limit theorem, which states that the distribution of sample means approaches a normal distribution as the sample size grows, even when the underlying population is not normally distributed (provided its variance is finite). When data deviate substantially from normality, especially in small samples, researchers may need to employ alternative approaches such as bootstrap methods or transformations to construct valid confidence intervals.
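One of the alternatives mentioned above, the bootstrap, can be sketched in a few lines. This is the simple percentile variant, chosen here for brevity; the function name, the resample count, and the skewed example data are all hypothetical.

```python
import random
from statistics import mean

def bootstrap_ci(data, stat=mean, level=0.95, resamples=5000, seed=0):
    """Percentile bootstrap interval: resample with replacement,
    recompute the statistic, and take the middle `level` share."""
    rng = random.Random(seed)
    n = len(data)
    estimates = sorted(
        stat(rng.choices(data, k=n)) for _ in range(resamples)
    )
    lo_idx = round((1 - level) / 2 * resamples)
    hi_idx = round((1 + level) / 2 * resamples) - 1
    return estimates[lo_idx], estimates[hi_idx]

# Hypothetical right-skewed sample
skewed = [1, 1, 2, 2, 2, 3, 3, 4, 5, 7, 9, 15, 22, 40]
low, high = bootstrap_ci(skewed)
print(f"Bootstrap 95% CI for the mean: {low:.2f} to {high:.2f}")
```

Unlike the normal-based formula, the resulting interval need not be symmetric around the sample mean, which is often appropriate for skewed data.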

Interpreting Critical Values in Statistical Testing

Critical values demarcate the boundaries between regions of acceptance and rejection in hypothesis tests. These values depend on three factors: the chosen significance level (alpha), the test statistic distribution (such as the normal, t, or chi-square distribution), and whether the test is one-tailed or two-tailed.

When a calculated test statistic exceeds the critical value in absolute terms, the result falls into the rejection region, leading researchers to reject the null hypothesis and declare the finding statistically significant. The critical value essentially answers the question: “How extreme must my test statistic be to conclude that the effect is real rather than random?”
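The effect of the tail choice on the critical value can be sketched as follows, assuming a standard normal test statistic. The function names are hypothetical, and the one-tailed case here tests for an effect in the positive direction only.

```python
from statistics import NormalDist

def z_critical(alpha=0.05, two_tailed=True):
    """Normal critical value; a two-tailed test splits alpha in half."""
    tail = alpha / 2 if two_tailed else alpha
    return NormalDist().inv_cdf(1 - tail)

def rejects_null(z_stat, alpha=0.05, two_tailed=True):
    """True when the statistic falls in the rejection region."""
    crit = z_critical(alpha, two_tailed)
    return abs(z_stat) > crit if two_tailed else z_stat > crit

print(round(z_critical(0.05, two_tailed=True), 3))   # 1.96
print(round(z_critical(0.05, two_tailed=False), 3))  # 1.645
print(rejects_null(1.8, two_tailed=True))    # False
print(rejects_null(1.8, two_tailed=False))   # True
```

The same statistic of 1.8 is significant in the one-tailed test but not in the two-tailed test, which is why the choice of tails should be made before looking at the data.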

Distinguishing Between Type I and Type II Errors

Understanding the relationship between significance levels and errors clarifies why confidence intervals and hypothesis tests matter. A Type I error occurs when researchers reject a true null hypothesis—accepting the predetermined alpha level (usually 0.05) means acknowledging a 5% risk of this mistake. A Type II error happens when the null hypothesis is not rejected despite being false in the population.

The power of a statistical test—its ability to detect true effects—increases with larger sample sizes and stronger effects. Confidence intervals indirectly address power concerns by revealing the precision of estimates. A study with very low power might produce wide confidence intervals even when the point estimate suggests a meaningful effect.
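The dependence of power on sample size and effect size can be sketched for the simplest case. The example assumes a two-sided one-sample z-test with a known standard deviation; the function name and the effect size of 0.5 are hypothetical.

```python
from math import sqrt
from statistics import NormalDist

def z_test_power(effect, sd, n, alpha=0.05):
    """Probability of rejecting the null when the true mean differs
    from the null value by `effect` (two-sided z-test, known sd)."""
    nd = NormalDist()
    crit = nd.inv_cdf(1 - alpha / 2)
    shift = effect * sqrt(n) / sd  # true effect in standard-error units
    return nd.cdf(shift - crit) + nd.cdf(-shift - crit)

for n in (10, 30, 100):
    print(n, round(z_test_power(effect=0.5, sd=1.0, n=n), 3))
```

With no true effect the function returns alpha, the Type I error rate; as the sample size or the effect grows, the result approaches 1 and the Type II error rate (one minus power) shrinks.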

Applying Confidence Intervals in Different Research Contexts

Confidence intervals adapt to various statistical scenarios beyond simple means. Researchers can construct confidence intervals for proportions, differences between groups, correlation coefficients, and regression parameters. Each application uses the same underlying principle but requires different formulas reflecting the nature of the parameter being estimated.
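As one example of a parameter-specific formula, the sketch below computes an interval for a proportion. It uses the simple Wald (normal approximation) interval, which is only one of several available methods and can behave poorly for small samples or proportions near 0 or 1; the function name and the counts are hypothetical.

```python
from math import sqrt
from statistics import NormalDist

def proportion_ci(successes, n, level=0.95):
    """Wald interval: p_hat +/- critical * sqrt(p_hat * (1 - p_hat) / n),
    clipped to the valid range [0, 1]."""
    p_hat = successes / n
    crit = NormalDist().inv_cdf(0.5 + level / 2)
    se = sqrt(p_hat * (1 - p_hat) / n)
    return max(0.0, p_hat - crit * se), min(1.0, p_hat + crit * se)

low, high = proportion_ci(60, 100)  # hypothetical: 60 successes in 100
print(f"95% CI for the proportion: {low:.3f} to {high:.3f}")
```

The structure is the same as for a mean (estimate plus or minus critical value times standard error); only the standard error formula changes.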

In medical research, confidence intervals around treatment effect estimates help clinicians understand not just whether a treatment works, but how much improvement patients might realistically expect. In quality control and manufacturing, confidence intervals on process parameters guide decisions about whether adjustments are necessary to meet specifications.

Assumptions Underlying Valid Confidence Intervals

Confidence intervals rest on several foundational assumptions. The sample must be representative of the population from which it was drawn—ideally through random selection, though practical considerations sometimes necessitate other sampling strategies. Additionally, the specified distribution used to calculate the interval must accurately reflect the sampling distribution of the estimator.

When these assumptions are violated, confidence intervals may be misleading. Non-random sampling might introduce bias, while using an inappropriate distribution could produce intervals with coverage properties different from their stated confidence level. Researchers must assess whether their data and methods satisfy these requirements.

Borderline P-Values and Confidence Interval Insights

Situations arise where p-values hover near the traditional 0.05 threshold, creating ambiguity about whether results should be declared statistically significant. Examining the corresponding confidence interval provides valuable perspective in these borderline cases. A p-value just above 0.05 corresponds to a 95% interval that barely includes the null value, and a p-value just below 0.05 to one that barely excludes it, so the two results carry nearly the same evidence. The interval adds what the p-value cannot show: a wide interval signals that the effect is poorly estimated whichever side of the threshold it falls on, while a narrow interval indicates a precise estimate whose range of plausible values can be judged for practical importance.

Common Misconceptions About Confidence Intervals

A widespread misinterpretation claims that a 95% confidence interval means there is a 95% probability the true parameter lies within the calculated interval. This statement, while intuitively appealing, misrepresents the frequentist interpretation. The true parameter either lies within the interval or it does not; in the frequentist framework, probability statements do not apply to a fixed unknown value. Rather, 95% refers to the long-run success rate of the method if repeated many times.

Another common error involves reporting confidence intervals without explaining their meaning to non-technical audiences. Simply stating a range of values leaves readers confused about what the interval represents and why it matters for interpreting research findings.

Choosing Appropriate Confidence Levels for Your Research

While 95% confidence represents the standard in most fields, the appropriate level depends on the context and consequences of errors. Fields where mistakes carry severe consequences, such as pharmaceutical development, often employ 99% confidence intervals. Conversely, exploratory research or quality control applications might use 90% confidence. The trade-off remains constant: higher confidence levels produce wider intervals.

FAQ: Addressing Common Questions

What exactly is the null value in a confidence interval?

The null value represents the parameter value indicating no effect or difference. For differences, such as a difference between two means or two proportions, the null value is zero (meaning no difference between groups). For ratio measures, such as risk ratios or odds ratios, the null value is one. If the confidence interval excludes the null value, the result is statistically significant at the corresponding alpha level.

Can confidence intervals be calculated for any statistical parameter?

Yes, confidence intervals apply to most population parameters, including means, proportions, differences between groups, correlation coefficients, and regression coefficients. Different parameters require different formulas, but the underlying logic remains consistent.

How does sample size affect confidence interval width?

All else being equal, larger samples produce narrower confidence intervals. The standard error is inversely proportional to the square root of the sample size, so it shrinks as the sample grows, resulting in tighter bounds around the point estimate.

When should I use confidence intervals instead of p-values?

Both tools provide complementary information. Confidence intervals offer insight into effect magnitude and precision, while p-values quantify how compatible the observed data are with the null hypothesis. Modern best practices recommend reporting both together.

What does a very wide confidence interval indicate?

Wide confidence intervals reveal substantial uncertainty in the estimate, potentially due to small sample size, high variability in the data, or both. This uncertainty must be considered when interpreting research findings.

